Operational Over-Tooling: When Your Stack Becomes the Product

How tool sprawl quietly breaks delivery, on-call, and governance, and the fixes that actually stick.

Friday 4:47 pm. A payment flow is failing. Slack is loud. Someone drops a screenshot of a red dashboard… then another. Then a link to logs. Then a different log tool “because this service ships there”. Traces are in a third place. Alerts are coming from a bot only one person knows how to tune, and they’re away today. Twelve tabs later, you still don’t have a single story of what happened, just fragments, guesses, and rising heart rates.

This is operational over-tooling in the wild. It doesn’t just add licences; it adds load. It turns incident response into a scavenger hunt and makes “moving fast” feel like gambling.

Over-tooling is an architecture anti-pattern because it shifts complexity out of code and into day-to-day operations, where it taxes every release and every outage.

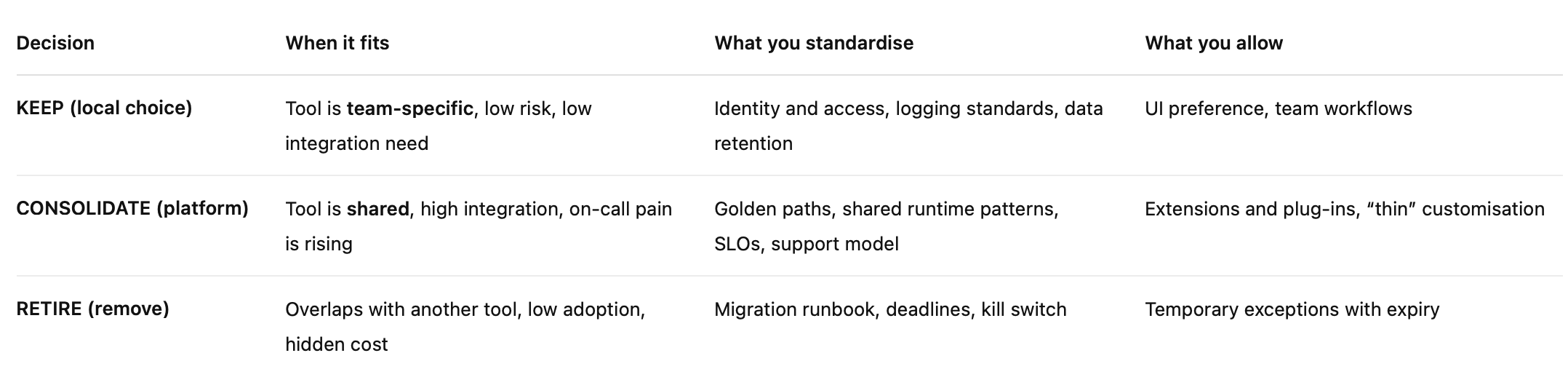

Before you buy “one more tool”, use this decision matrix. It forces the real question: keep, consolidate, or retire and who owns it.

What “operational over-tooling” looks like in real teams

It usually starts with a good reason.

A team ships a new service. They need visibility fast, so they pick a monitoring tool they already know. Another team has a different stack and chooses something else. Security rolls out a scanner “just for coverage”. A platform team adds a CI/CD tool to unblock delivery. None of these choices are reckless. In isolation, they’re sensible.

Then a Friday incident happens and all the sensible choices collide.

You’re in the war room and the questions sound familiar: “Where are the logs for this one?” “Which dashboard is the source of truth?” “Why is the alert firing when the trace looks clean?” Someone shares a link, then another, then another. The team isn’t debugging the payment flow anymore they’re debugging the tooling around the payment flow.

That’s operational over-tooling: when your stack becomes a second system you have to operate before you can operate the product.

The symptoms you can feel (not just measure)

Same job, three tools. Monitoring is split, CI/CD is split, feature flags are split. Everyone has a reason. Nobody has the full picture.

Incidents become a scavenger hunt. Logs live here, traces live there, alerts come from somewhere else, and correlation becomes guesswork.

“Hidden” work becomes the real work. Agents, connectors, pipelines, retention rules, token rotation, webhook retries. It’s not visible on the roadmap, but it eats the roadmap anyway.

Ownership gets blurry. When something breaks, the question isn’t “how do we fix it?” — it’s “who even owns this tool?”

Tooling turns into a career moat. One person can tune the alerting bot, manage the permissions model, or fix the pipeline when it jams. When they’re away, progress slows to a crawl.

The giveaway is emotional, not technical: teams start sounding tired. They stop trusting signals. They default to “ask Sam, he knows the system”. That’s not resilience, that’s dependency.

The root cause (it’s rarely the tool)

This pattern doesn’t exist because teams are bad at picking tools. It exists because the organisation is bad at owning them.

Procurement-by-pain. The last incident hurts, so the next purchase feels urgent. Speed beats design, and the tool ships faster than the operating model.

Local optimisation beats global outcomes. Each team improves their corner, but the company pays the integration and support tax across every corner.

No thin-core standards. Without a few non-negotiables (identity, observability, policy enforcement), every new tool becomes its own mini-platform with its own rules.

Decisions don’t get revisited. Tool choices get made once, then slide into auto-renew. The cost isn’t just the licence, it’s the permanent operational burden.

If you’ve ever watched an incident drift from “what’s failing?” to “which tool do we trust?”, you’ve seen the anti-pattern. And it’s exactly why the decision matrix matters: it forces a clear call to keep, consolidate, or retire before “one more tool” becomes “one more system to run.”

Why it’s an architecture anti-pattern (not a shopping problem)

If this was just a shopping problem, you could solve it with a better shortlist, a sharper RFP, and a hard negotiation. But operational over-tooling keeps coming back because it’s really an architecture and operating-model problem: you’re creating more moving parts than your organisation can reliably run.

It increases operational load faster than delivery speed

Every tool promises “faster delivery”. Then it quietly adds a new slice of operational reality that someone has to own, secure, integrate, monitor, upgrade, and pay for. Multiply that by a few teams, a few years, and a few “temporary” exceptions, and your stack becomes a second product.

Each new tool tends to add:

another auth model (roles, groups, service accounts, break-glass access)

another data model (naming, tagging, schemas, retention rules)

another billing line (renewals, tier creep, usage surprises)

another integration surface (agents, APIs, webhooks, connectors, plugins)

another failure mode (outages, rate limits, misconfig, noisy alerts)

That’s why tool-centric DevOps without the operating model creates failure and fatigue. You don’t just buy software, you buy ongoing work, plus a new way to fail.

It slows incident response

Over-tooling doesn’t usually break the system directly. It breaks the story of the system.

When telemetry is split across tools, teams lose the ability to correlate signals quickly: the alert fires in one place, the logs are somewhere else, traces are incomplete, and dashboards disagree. Time-to-detect stretches because nobody trusts the first signal. Time-to-recover stretches because you’re stitching together fragments under pressure.

The result looks like this: more Slack messages, more screenshots, more “can someone check X?” and less actual diagnosis.

It makes governance either heavy or useless

Tool sprawl pushes governance into two bad extremes.

Heavy governance: endless approvals and architecture reviews because nobody trusts the stack’s risk profile anymore. Every tool request becomes a debate about identity, data access, auditability, and support because those basics aren’t standardised.

Useless governance: “teams can choose anything” until a breach, audit finding, or major outage forces a reset. Then you swing back to heavy governance, usually with a blunt consolidation program and a lot of resentment.

A healthy setup sits in the middle: a thin core of standards, clear ownership, and a simple decision loop. Without that, you’re not managing tools, you’re managing chaos with invoices attached.

The “keep vs consolidate” decision framework

Here’s the mistake most organisations make: they treat every tool decision like a feature decision. One team has a need, they pick a tool, and the business moves on. That works right up until the tool becomes shared infrastructure, then you’re no longer choosing a product, you’re choosing an operating burden.

So instead of arguing “Tool A vs Tool B”, make the first decision simpler: is this a local choice, or does it belong on the platform? Once you agree on the lane, the vendor discussion becomes much easier (and a lot less political).

Scannable checklist (copy/paste ready)

A tool can be a local choice only if all of these are true:

No customer data classification surprises

It doesn’t store, process, or expose sensitive data in ways that will bite you later.

No privileged access outside standard IAM

It uses your normal identity patterns (SSO, MFA, least privilege). No rogue admin accounts.

No dependency in incident response

If the tool is down, you can still debug production. It’s helpful, not required.

Can be replaced inside a sprint

Low switching cost. If you had to rip it out, you wouldn’t need a six-month program.

Has a named owner and a clear exit plan

Someone is accountable for cost, security, and lifecycle, and you already know how you’d leave.

If any answer is “no”, it goes into the platform lane. That means: standardise it, support it properly, and make it part of the “thin core” rather than another optional add-on.

And yes, this ties straight back to the matrix at the top: local choice = keep, platform lane = consolidate, and anything that fails both (duplicate, low value, no owner) is a retire candidate.

Relatd Artciles:

Fix pattern 1: Build a thin core, then give teams freedom above it

The fastest way to shrink tool sprawl without starting a civil war is to separate standards from preferences. Most teams don’t actually want unlimited choice. They want freedom where it helps delivery and consistency where inconsistency hurts.

That’s the “thin core + autonomy above” move: lock down the few foundations that make systems safe and operable, then let teams innovate on top without reinventing the basics every time.

The non-negotiables (thin core)

These are the pieces that should feel boring because boring is reliable:

Identity and access patterns

SSO, MFA, least privilege, consistent service account handling, break-glass rules.

Observability standards

Common fields, correlation IDs, agreed retention, and one standard pipeline for logs/metrics/traces.

Deployment and rollback guardrails

Release patterns, rollback expectations, feature toggle rules, and what “safe deploy” means here.

Policy enforcement points

Where controls live: gateway for ingress policy, runtime for service controls, CI for build-time checks.

This pattern shows up again and again because it reduces sprawl without freezing delivery. Teams can still move quickly, they’re just moving quickly on rails instead of inventing a new train track each sprint.

What autonomy actually means (examples)

Autonomy isn’t “do whatever you want”. It’s “choose freely inside safe boundaries”.

Teams can choose UI dashboards, but logs must land in the standard pipeline with the standard fields.

Teams can choose feature flag products, but kill switches follow one pattern (naming, ownership, where they’re documented).

Teams can experiment, but experiments expire unless adopted (time-boxed, owned, and reviewed).

The goal isn’t to win an argument about tools. It’s to make sure every tool choice doesn’t quietly become a company-wide dependency.

Fix pattern 2: Platform engineering and an Internal Developer Platform (IDP)

If thin core is the rules, an IDP is the shortcut.

Most tool sprawl happens because teams are trying to assemble a working delivery and operations experience from scratch: build, deploy, observe, secure, repeat. An Internal Developer Platform bundles those capabilities into something that’s supported, consistent, and easy to adopt.

What an IDP does (plain English)

Provides “golden paths” that make the right thing the easy thing

Create a service, get standard logging, safe deploy, sensible alerts, and a runbook template without begging three teams.

Reduces tool sprawl by packaging a supported set of capabilities

Build, deploy, and observe with defaults that work and integrations that are already done.

Treats developer experience as a product

There’s a roadmap, a support model, clear ownership, and feedback loops, not a pile of scripts nobody owns.

The trap to avoid

Don’t build a “platform of platforms”

If your platform needs a platform team to operate it, you’ve recreated the problem with a nicer logo.

Keep it small, opinionated, and measured by adoption

Adoption is the score. If teams avoid it, it’s not a platform, it’s a side project.

Fix pattern 3: A lightweight governance loop that prevents relapse

Even with a thin core and a platform, sprawl returns if decisions don’t have a lifecycle. The fix isn’t heavy approvals. It’s a simple loop that keeps visibility and ownership alive.

The Tooling Decision Record (TDR) in one page

Think of this as the smallest artefact that stops “we bought it because reasons”.

Include:

What problem are we solving?

Who owns it? (service owner + platform owner)

What is the exit plan?

What data and access does it touch?

What is the success measure? (adoption, incident reduction, cost)

If the TDR can’t be written clearly, that’s usually a signal the tool choice isn’t clear either.

Quarterly rationalisation cadence (30 minutes per domain)

This isn’t a big program. It’s maintenance — like brushing your teeth.

Keep / consolidate / retire review

Licence true-up

Security review refresh

Decommission plan for “retire”

This matches what actually works in sprawl governance: visibility, ownership, regular review, and renewal control without turning every request into theatre.

Fix pattern 4: Use AI to reduce friction, not to multiply tools

AI can either cut your operational overhead or become the newest category of sprawl. The difference is whether AI is used to simplify workflows or to introduce new platforms with new permissions, new data paths, and new vendors.

Where AI helps immediately

Draft TDRs from a template (problem statement, risks, owners, exit plan)

Generate migration runbooks and test checklists (so consolidation doesn’t stall)

Summarise incidents across Slack + tickets + telemetry (faster shared understanding)

Where AI makes sprawl worse

“AI tool of the week” procurement

Every team trialling a different assistant with a different data policy.

Multiple copilots with different permissions and data paths

Now you’ve got duplicated tooling and a mess of access, retention, and compliance questions.

Use AI like power steering, not a new engine. If it makes your stack bigger, noisier, or harder to govern, it’s not helping; it’s just moving the burden somewhere else.

A practical 30/60/90-day plan (doable, not heroic)

You don’t fix tool sprawl with a big-bang program and a six-month steering committee. You fix it by stopping new sprawl, consolidating one capability end-to-end, then locking in a lightweight loop so you don’t slide back.

First 30 days: stop the bleeding

Your goal in the first month is simple: no more surprise tooling.

Freeze new tools in the platform lane unless a TDR exists

If a tool affects identity, customer data, or incident response, it needs a one-page Tooling Decision Record. No TDR, no new dependency.

Inventory the top 20 tools by pain, not by popularity

Rank them by:

cost (licence + hidden support effort)

access risk (privileged roles, data exposure)

incident dependency (do you need it to debug production?)

Pick two consolidation targets

Start with the two areas that create the most fragmentation:

observability (logs/metrics/traces/alerts)

CI/CD (pipelines, releases, rollback patterns)

Keep it focused. Two targets is realistic. Ten targets is theatre.

60 days: consolidate one lane

Now you do one consolidation properly. Not “we chose a tool”. Real consolidation: standards, migration, ownership.

Choose the standard for one capability

Example: logging + tracing correlation. Define the required fields, correlation IDs, and the source of truth pipeline.

Migrate the highest pain services first

Don’t start with the easiest. Start with the services that:

page people most often

block incident response

have the messiest “where do I look?” story

That’s how you earn trust fast.

Publish golden path docs and a support model

One page that answers:

how to onboard a service in under an hour

what’s supported (and what’s not)

who to contact when it breaks

If teams can’t adopt it quickly, they won’t adopt it at all.

90 days: lock in governance

By day 90, you want the system to stay clean without constant heroics from you.

Run a quarterly rationalisation meeting

30 minutes per domain: keep, consolidate, retire. Decisions recorded. Owners assigned.

Require a TDR for renewals

This is where you win. Tool sprawl often survives because renewals are automatic. Make renewals earn their place.

Make exceptions expire unless renewed

Every exception has an end date. If it’s still valuable, renew it with a fresh TDR. If not, it disappears quietly, which is exactly what you want.

The aim isn’t to have fewer tools for the sake of it. It’s to have fewer operational stories your team needs to remember at 4:47 pm on a Friday.

The takeaway

Back to Friday 4:47pm. The payment flow didn’t fail because the team lacked tools. It failed because, in the moment that mattered, nobody could see a single, trusted story. The signals were scattered, the dashboards disagreed, and the “one person who knows the bot” wasn’t online. That’s the real cost of over-tooling: not licences, but minutes and mental load when pressure is high.

Here’s the truth in one line: the fix is less about tools, more about ownership and boundaries. Your best engineers shouldn’t be doing archaeology across dashboards.

If you want architecture playbooks you can run on Monday, subscribe to my newsletter.